Upgrading Kubernetes versions

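Notes:

- An upgrade must not skip a minor version. For example:

1.19.x → 1.20.y: allowed (adjacent minor versions)

1.19.x → 1.21.y: not allowed (it skips the 1.20 minor version)

1.21.x → 1.21.y: allowed (as long as y > x)

So to move across several minor versions, upgrade step by step, one minor version at a time.

Node level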

1. Upgrade the master nodes first (with several masters, upgrade them one at a time)

2. Then upgrade the worker nodes

Software level

1. Upgrade kubeadm first

2. Drain the node

3. Upgrade the components (etcd, DNS, and so on)

4. Uncordon the node

5. Upgrade kubelet and kubectl
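The version rule from the notes above can be sketched as a small shell check. `can_upgrade` is a hypothetical helper written for this article, not part of kubeadm; it only compares version strings of the form `1.19.3`, `v1.19.16` or `1.19.16-0`.

```shell
# Hypothetical helper (not part of kubeadm): exits 0 when a direct upgrade
# from version $1 to version $2 follows the rule described in the notes.
can_upgrade() (
  from=${1#v}; to=${2#v}                      # drop an optional leading "v"
  from_major=${from%%.*}; rest=${from#*.}
  from_minor=${rest%%.*}; from_patch=${rest#*.}; from_patch=${from_patch%%-*}
  to_major=${to%%.*}; rest=${to#*.}
  to_minor=${rest%%.*}; to_patch=${rest#*.}; to_patch=${to_patch%%-*}

  [ "$from_major" -eq "$to_major" ] || exit 1
  if [ "$to_minor" -eq "$from_minor" ]; then
    # Same minor version: only a higher patch release counts as an upgrade.
    [ "$to_patch" -gt "$from_patch" ]
  else
    # Different minor version: only the next minor version is allowed.
    [ "$to_minor" -eq $((from_minor + 1)) ]
  fi
)

# Example: report whether the upgrade performed in this article is allowed.
if can_upgrade v1.18.2 v1.19.16; then
  echo "v1.18.2 -> v1.19.16: allowed"
fi
```

The function runs in a subshell, so its variables do not leak into the calling shell.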

I. Upgrading the cluster with yum

Kubernetes v1.18.2 -> 1.19.16-0

1. Aliyun yum repository for Kubernetes

cat > /etc/yum.repos.d/kubernetes.repo <<EOF
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

2. List the available versions

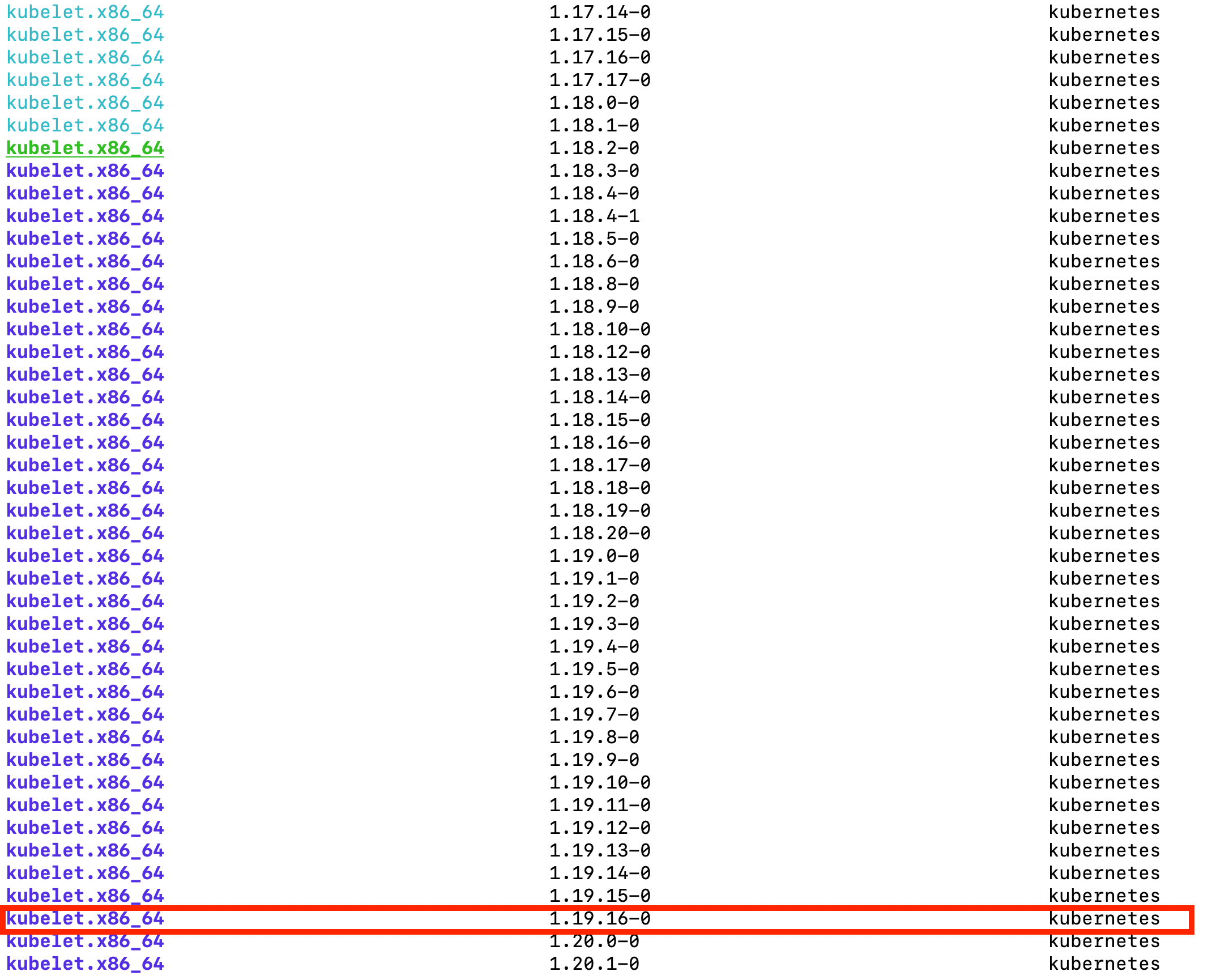

yum list --showduplicates kubeadm --disableexcludes=kubernetes

At the time of writing, this command shows that the latest release in the 1.19 series is 1.19.16-0.
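Picking the newest patch release out of a long version list can also be scripted. The following is a minimal sketch; `latest_patch` is a hypothetical helper that reads version strings such as `1.19.16-0` on standard input, and it assumes the version is the second column of the `yum list` output.

```shell
# Hypothetical helper: print the highest patch release of minor series $1
# (for example 1.19) among the version strings read from standard input.
latest_patch() {
  grep -E "^$1\.[0-9]+-[0-9]+\$" |  # keep only versions of the requested series
    sort -t. -k3,3n |               # sort numerically by the patch number
    tail -n 1
}

# Self-contained demonstration with a few sample versions.
printf '%s\n' 1.18.20-0 1.19.2-0 1.19.16-0 1.19.9-0 1.20.0-0 | latest_patch 1.19
```

On a real host the input could come from `yum list --showduplicates kubeadm --disableexcludes=kubernetes | awk '{print $2}'`.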

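3. Upgrade the control plane on k8s-master3

Run the following commands on k8s-master3.

3.1 Upgrade the Kubernetes packages with yum

yum install kubeadm-1.19.16-0 kubelet-1.19.16-0 kubectl-1.19.16-0 --disableexcludes=kubernetes

3.2 Drain the node and check whether the cluster can be upgraded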

kubectl drain k8s-master3 --ignore-daemonsets --delete-local-data

kubeadm upgrade plan

Log:

[root@k8s-master3 ~]# kubectl drain k8s-master3 --ignore-daemonsets --delete-local-data

node/k8s-master3 already cordoned

WARNING: ignoring DaemonSet-managed Pods: ingress-nginx/nginx-ingress-controller-qkd2k, kube-system/calico-node-2zq9t, kube-system/kube-proxy-c4lct, kube-system/nginx-ingress-controller-rplcd, monitoring/node-exporter-c5pgt

evicting pod kube-system/kubernetes-dashboard-69dc58dcd9-6cnks

evicting pod kube-system/coredns-56c4df889b-ffws5

pod/kubernetes-dashboard-69dc58dcd9-6cnks evicted

pod/coredns-56c4df889b-ffws5 evicted

node/k8s-master3 evicted

[root@k8s-master3 ~]# kubeadm upgrade plan

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade] Fetching available versions to upgrade to

[upgrade/versions] Cluster version: v1.18.2

[upgrade/versions] kubeadm version: v1.19.16

I0524 14:34:54.330620 8021 version.go:255] remote version is much newer: v1.24.0; falling back to: stable-1.19

[upgrade/versions] Latest stable version: v1.19.16

[upgrade/versions] Latest stable version: v1.19.16

[upgrade/versions] Latest version in the v1.18 series: v1.18.20

[upgrade/versions] Latest version in the v1.18 series: v1.18.20

Components that must be upgraded manually after you have upgraded the control plane with 'kubeadm upgrade apply':

COMPONENT CURRENT AVAILABLE

kubelet 6 x v1.18.2 v1.18.20

Upgrade to the latest version in the v1.18 series:

COMPONENT CURRENT AVAILABLE

kube-apiserver v1.18.2 v1.18.20

kube-controller-manager v1.18.2 v1.18.20

kube-scheduler v1.18.2 v1.18.20

kube-proxy v1.18.2 v1.18.20

CoreDNS 1.6.7 1.7.0

etcd 3.4.3-0 3.4.3-0

You can now apply the upgrade by executing the following command:

kubeadm upgrade apply v1.18.20

_____________________________________________________________________

Components that must be upgraded manually after you have upgraded the control plane with 'kubeadm upgrade apply':

COMPONENT CURRENT AVAILABLE

kubelet 6 x v1.18.2 v1.19.16

Upgrade to the latest stable version:

COMPONENT CURRENT AVAILABLE

kube-apiserver v1.18.2 v1.19.16

kube-controller-manager v1.18.2 v1.19.16

kube-scheduler v1.18.2 v1.19.16

kube-proxy v1.18.2 v1.19.16

CoreDNS 1.6.7 1.7.0

etcd 3.4.3-0 3.4.13-0

You can now apply the upgrade by executing the following command:

kubeadm upgrade apply v1.19.16

_____________________________________________________________________

The table below shows the current state of component configs as understood by this version of kubeadm.

Configs that have a "yes" mark in the "MANUAL UPGRADE REQUIRED" column require manual config upgrade or

resetting to kubeadm defaults before a successful upgrade can be performed. The version to manually

upgrade to is denoted in the "PREFERRED VERSION" column.

API GROUP CURRENT VERSION PREFERRED VERSION MANUAL UPGRADE REQUIRED

kubeproxy.config.k8s.io v1alpha1 v1alpha1 no

kubelet.config.k8s.io v1beta1 v1beta1 no

_____________________________________________________________________
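The plan output above lists one `kubeadm upgrade apply` command per offered target. As a rough sketch, the offered target versions can be pulled out of saved plan output with `sed`; `plan_targets` is a hypothetical helper and relies only on the command lines having the form shown in the log.

```shell
# Hypothetical helper: read `kubeadm upgrade plan` output on standard input
# and print each version offered in a "kubeadm upgrade apply vX.Y.Z" line.
plan_targets() {
  sed -n 's/^[[:space:]]*kubeadm upgrade apply \(v[0-9][0-9.]*\)[[:space:]]*$/\1/p'
}

# Self-contained demonstration using two lines copied from the log above.
printf '%s\n' \
  'You can now apply the upgrade by executing the following command:' \
  'kubeadm upgrade apply v1.19.16' | plan_targets
```

On a control-plane node the helper could be fed directly with `kubeadm upgrade plan | plan_targets`.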

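3.2.1 Checking the upgrade plan before kubeadm has been upgraded

The following was run on k8s-master1.

Check whether the cluster can be upgraded and fetch the versions it can be upgraded to:

kubeadm upgrade plan

Only an upgrade within the v1.18 series is offered: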

[root@k8s-master1 yum.repos.d]# kubeadm upgrade plan

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade] Fetching available versions to upgrade to

[upgrade/versions] Cluster version: v1.18.2

[upgrade/versions] kubeadm version: v1.18.2

I0524 14:12:18.421427 9944 version.go:252] remote version is much newer: v1.24.0; falling back to: stable-1.18

[upgrade/versions] Latest stable version: v1.18.20

[upgrade/versions] Latest stable version: v1.18.20

[upgrade/versions] Latest version in the v1.18 series: v1.18.20

[upgrade/versions] Latest version in the v1.18 series: v1.18.20

Components that must be upgraded manually after you have upgraded the control plane with 'kubeadm upgrade apply':

COMPONENT CURRENT AVAILABLE

Kubelet 6 x v1.18.2 v1.18.20

Upgrade to the latest version in the v1.18 series:

COMPONENT CURRENT AVAILABLE

API Server v1.18.2 v1.18.20

Controller Manager v1.18.2 v1.18.20

Scheduler v1.18.2 v1.18.20

Kube Proxy v1.18.2 v1.18.20

CoreDNS 1.6.7 1.6.7

Etcd 3.4.3 3.4.3-0

You can now apply the upgrade by executing the following command:

kubeadm upgrade apply v1.18.20

Note: Before you can perform this upgrade, you have to update kubeadm to v1.18.20.

_____________________________________________________________________

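At this point the cluster cannot be upgraded to v1.19.16. Trying to do so fails with the following error:

The specified target version "v1.19.16" is at least one minor release higher than the kubeadm minor release (19 > 18). Such an upgrade is not supported.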

[root@k8s-master1 yum.repos.d]# kubeadm upgrade apply v1.19.16

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade/version] You have chosen to change the cluster version to "v1.19.16"

[upgrade/versions] Cluster version: v1.18.2

[upgrade/versions] kubeadm version: v1.18.2

[upgrade/version] FATAL: the --version argument is invalid due to these fatal errors:

- Specified version to upgrade to "v1.19.16" is at least one minor release higher than the kubeadm minor release (19 > 18). Such an upgrade is not supported

Please fix the misalignments highlighted above and try upgrading again

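To see the stack trace of this error execute with --v=5 or higher

Upgrading to v1.18.20 also requires kubeadm to be upgraded to that version first:

- The specified target version "v1.18.20" is higher than the kubeadm version "v1.18.2". Upgrade kubeadm first, using the tool you used to install kubeadm.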

[root@k8s-master1 yum.repos.d]# kubeadm upgrade apply v1.18.20

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade/version] You have chosen to change the cluster version to "v1.18.20"

[upgrade/versions] Cluster version: v1.18.2

[upgrade/versions] kubeadm version: v1.18.2

[upgrade/version] FATAL: the --version argument is invalid due to these errors:

- Specified version to upgrade to "v1.18.20" is higher than the kubeadm version "v1.18.2". Upgrade kubeadm first using the tool you used to install kubeadm

Can be bypassed if you pass the --force flag

To see the stack trace of this error execute with --v=5 or higher

Install the 1.18.20 versions of kubeadm, kubelet and kubectl:

yum install kubeadm-1.18.20-0 kubelet-1.18.20-0 kubectl-1.18.20-0 --disableexcludes=kubernetes

Switching kubeadm from v1.19.16 back to v1.18.20

If the installed version (v1.19.16) is newer than the one needed (v1.18.20), yum will not install the older version directly:

[root@k8s-master3 ~]# kubeadm version

kubeadm version: &version.Info{Major:"1", Minor:"19", GitVersion:"v1.19.16", GitCommit:"e37e4ab4cc8dcda84f1344dda47a97bb1927d074", GitTreeState:"clean", BuildDate:"2021-10-27T16:24:44Z", GoVersion:"go1.15.15", Compiler:"gc", Platform:"linux/amd64"}

[root@k8s-master3 ~]# yum install kubeadm-1.18.20-0 kubelet-1.18.20-0 kubectl-1.18.20-0 --disableexcludes=kubernetes

已加载插件:fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

匹配 kubeadm-1.18.20-0.x86_64 的软件包已经安装。正在检查更新。

匹配 kubelet-1.18.20-0.x86_64 的软件包已经安装。正在检查更新。

匹配 kubectl-1.18.20-0.x86_64 的软件包已经安装。正在检查更新。

无须任何处理

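Remove the current version first:

yum remove kubeadm-1.19.16-0 kubelet-1.19.16-0 kubectl-1.19.16-0

List all available versions:

yum list --showduplicates kubeadm --disableexcludes=kubernetes

Then install the version you need:

yum install kubeadm-1.18.20-0 kubelet-1.18.20-0 kubectl-1.18.20-0 --disableexcludes=kubernetes

3.3 Upgrade to 1.19.16

kubeadm upgrade apply v1.19.16

Note: the worker nodes are all at v1.18.2 as well, so no node ends up more than one minor version behind the upgraded control plane.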
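The note about worker versions can be turned into a quick check. The sketch below is an illustration only: `kubelet_skew_ok` is a hypothetical helper, and the default limit of two minor versions is an assumption based on the Kubernetes version skew policy that applied to 1.19, not something stated in this article. It compares minor versions only.

```shell
# Hypothetical helper: exits 0 when a kubelet at version $2 is not newer than
# a control plane at version $1 and at most $3 minor versions older.
# The default limit of 2 is an assumption (skew policy of the 1.19 era).
kubelet_skew_ok() (
  api=${1#v}; kubelet=${2#v}; max_skew=${3:-2}
  rest=${api#*.}; api_minor=${rest%%.*}
  rest=${kubelet#*.}; kubelet_minor=${rest%%.*}
  skew=$((api_minor - kubelet_minor))
  [ "$skew" -ge 0 ] && [ "$skew" -le "$max_skew" ]
)

# Example: the situation in this article, control plane v1.19.16, kubelets v1.18.2.
if kubelet_skew_ok v1.19.16 v1.18.2; then
  echo "kubelet v1.18.2 is within the allowed skew of v1.19.16"
fi
```

Passing a third argument changes the limit, for example `kubelet_skew_ok v1.19.16 v1.18.2 1` to require that kubelets are at most one minor version behind.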

The image pull fails:

[root@k8s-master3 ~]# kubeadm upgrade apply v1.19.16

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade/version] You have chosen to change the cluster version to "v1.19.16"

[upgrade/versions] Cluster version: v1.18.2

[upgrade/versions] kubeadm version: v1.19.16

[upgrade/confirm] Are you sure you want to proceed with the upgrade? [y/N]: y

[upgrade/prepull] Pulling images required for setting up a Kubernetes cluster

[upgrade/prepull] This might take a minute or two, depending on the speed of your internet connection

[upgrade/prepull] You can also perform this action in beforehand using 'kubeadm config images pull'

[preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-apiserver:v1.19.16: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-controller-manager:v1.19.16: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-scheduler:v1.19.16: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-proxy:v1.19.16: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/etcd:3.4.13-0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/coredns:1.7.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

k8s.gcr.io is not reachable from mainland China, so running kubeadm upgrade apply v1.19.16 directly fails while pulling images. Instead, pull the v1.19.16 images from the Aliyun mirror registry.aliyuncs.com/google_containers:

kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.16

[root@k8s-master3 ~]# kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.19.16

W0524 14:57:23.044672 23170 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-apiserver:v1.19.16

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-controller-manager:v1.19.16

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-scheduler:v1.19.16

[config/images] Pulled registry.aliyuncs.com/google_containers/kube-proxy:v1.19.16

[config/images] Pulled registry.aliyuncs.com/google_containers/pause:3.2

[config/images] Pulled registry.aliyuncs.com/google_containers/etcd:3.4.13-0

[config/images] Pulled registry.aliyuncs.com/google_containers/coredns:1.7.0

Retag the images

For example: registry.aliyuncs.com/google_containers/kube-apiserver:v1.19.16 → k8s.gcr.io/kube-apiserver:v1.19.16
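The name mapping in this example can be sketched as a dry run that prints the `docker tag` commands instead of executing them, so they can be reviewed first. `gcr_name` and `retag_commands` are hypothetical helpers; the sketch assumes only images under registry.aliyuncs.com/google_containers/ should be retagged.

```shell
# Hypothetical helper: map a mirror image name to its k8s.gcr.io name by
# keeping only the part after the last slash (image name and tag).
gcr_name() {
  printf 'k8s.gcr.io/%s\n' "${1##*/}"
}

# Hypothetical helper: read "repository:tag" lines on standard input and print
# a docker tag command for every image from the google_containers mirror.
retag_commands() {
  while read -r image; do
    case "$image" in
      registry.aliyuncs.com/google_containers/*)
        printf 'docker tag %s %s\n' "$image" "$(gcr_name "$image")" ;;
    esac
  done
}

# Self-contained demonstration: unrelated images are skipped.
printf '%s\n' \
  registry.aliyuncs.com/google_containers/kube-apiserver:v1.19.16 \
  nginx:latest | retag_commands
```

On a real host the input could come from `docker images --format '{{.Repository}}:{{.Tag}}'`, and the printed commands could be piped to `sh` once they look right.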

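Note ⚠️: the command below assumes that every registry.aliyuncs.com image on the host is one of the images just pulled. If that is not the case, adjust the filter or tag the images by hand.

for i in $(docker images | grep registry.aliyuncs.com | awk '{print $1":"$2}'); do docker tag "${i}" "k8s.gcr.io/${i##*/}"; done

Run the upgrade again. This time it succeeds: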

[root@k8s-master3 ~]# kubeadm upgrade apply v1.19.16

[upgrade/config] Making sure the configuration is correct:

[upgrade/config] Reading configuration from the cluster...

[upgrade/config] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks.

[upgrade] Running cluster health checks

[upgrade/version] You have chosen to change the cluster version to "v1.19.16"

[upgrade/versions] Cluster version: v1.18.2

[upgrade/versions] kubeadm version: v1.19.16

[upgrade/confirm] Are you sure you want to proceed with the upgrade? [y/N]: y

[upgrade/prepull] Pulling images required for setting up a Kubernetes cluster

[upgrade/prepull] This might take a minute or two, depending on the speed of your internet connection

[upgrade/prepull] You can also perform this action in beforehand using 'kubeadm config images pull'

[upgrade/apply] Upgrading your Static Pod-hosted control plane to version "v1.19.16"...

Static pod: kube-apiserver-k8s-master3 hash: 1c4fb345ed428e55f3069e2f6fff4025

Static pod: kube-controller-manager-k8s-master3 hash: 968f91dd7c47db1906dd7a4473b3c10a

Static pod: kube-scheduler-k8s-master3 hash: d43b0b00305163e674f0971f103dad48

[upgrade/etcd] Upgrading to TLS for etcd

Static pod: etcd-k8s-master3 hash: 52ba9fdb374c576821481e627ad9c0da

[upgrade/staticpods] Preparing for "etcd" upgrade

[upgrade/staticpods] Renewing etcd-server certificate

[upgrade/staticpods] Renewing etcd-peer certificate

[upgrade/staticpods] Renewing etcd-healthcheck-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/etcd.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-04-25/etcd.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: etcd-k8s-master3 hash: 52ba9fdb374c576821481e627ad9c0da

Static pod: etcd-k8s-master3 hash: 52ba9fdb374c576821481e627ad9c0da

Static pod: etcd-k8s-master3 hash: 52ba9fdb374c576821481e627ad9c0da

Static pod: etcd-k8s-master3 hash: 52ba9fdb374c576821481e627ad9c0da

Static pod: etcd-k8s-master3 hash: f29da30096b3d1ef5a64d24035c00590

[apiclient] Found 3 Pods for label selector component=etcd

[upgrade/staticpods] Component "etcd" upgraded successfully!

[upgrade/etcd] Waiting for etcd to become available

[upgrade/staticpods] Writing new Static Pod manifests to "/etc/kubernetes/tmp/kubeadm-upgraded-manifests043748105"

[upgrade/staticpods] Preparing for "kube-apiserver" upgrade

[upgrade/staticpods] Renewing apiserver certificate

[upgrade/staticpods] Renewing apiserver-kubelet-client certificate

[upgrade/staticpods] Renewing front-proxy-client certificate

[upgrade/staticpods] Renewing apiserver-etcd-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-apiserver.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-04-25/kube-apiserver.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: kube-apiserver-k8s-master3 hash: 1c4fb345ed428e55f3069e2f6fff4025

Static pod: kube-apiserver-k8s-master3 hash: 1c4fb345ed428e55f3069e2f6fff4025

Static pod: kube-apiserver-k8s-master3 hash: 90e9fe1cac2cbd1a060d026a454e1f9c

[apiclient] Found 3 Pods for label selector component=kube-apiserver

[upgrade/staticpods] Component "kube-apiserver" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-controller-manager" upgrade

[upgrade/staticpods] Renewing controller-manager.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-controller-manager.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-04-25/kube-controller-manager.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: kube-controller-manager-k8s-master3 hash: 968f91dd7c47db1906dd7a4473b3c10a

Static pod: kube-controller-manager-k8s-master3 hash: 563c39d0d860b8b3811aa5fccbed7eef

[apiclient] Found 3 Pods for label selector component=kube-controller-manager

[upgrade/staticpods] Component "kube-controller-manager" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-scheduler" upgrade

[upgrade/staticpods] Renewing scheduler.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-scheduler.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-04-25/kube-scheduler.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: kube-scheduler-k8s-master3 hash: d43b0b00305163e674f0971f103dad48

Static pod: kube-scheduler-k8s-master3 hash: b06246adee2a0b516af2a39b081397fd

[apiclient] Found 3 Pods for label selector component=kube-scheduler

[upgrade/staticpods] Component "kube-scheduler" upgraded successfully!

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[addons] Applied essential addon: CoreDNS

[endpoint] WARNING: port specified in controlPlaneEndpoint overrides bindPort in the controlplane address

[addons] Applied essential addon: kube-proxy

[upgrade/successful] SUCCESS! Your cluster was upgraded to "v1.19.16". Enjoy!

[upgrade/kubelet] Now that your control plane is upgraded, please proceed with upgrading your kubelets if you haven't already done so.
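As the last log line says, the kubelets still have to be upgraded node by node. A small sketch for listing the nodes whose kubelet is not yet at the target version follows; `nodes_behind` is a hypothetical helper that assumes the VERSION column is the last column of `kubectl get nodes --no-headers`.

```shell
# Hypothetical helper: read `kubectl get nodes --no-headers` output on standard
# input and print the name and version of every node not at target version $1.
nodes_behind() {
  awk -v target="$1" '$NF != target { print $1, $NF }'
}

# Self-contained demonstration with two sample rows.
printf '%s\n' \
  'k8s-master1 Ready master 63d v1.18.2' \
  'k8s-master3 Ready master 53d v1.19.16' | nodes_behind v1.19.16
```

Against a live cluster this would be `kubectl get nodes --no-headers | nodes_behind v1.19.16`; an empty result means every node reports the target version.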

3.4 Restart the kubelet service

[root@k8s-master3 ~]# systemctl daemon-reload

[root@k8s-master3 ~]# systemctl restart kubelet

[root@k8s-master3 ~]# kubectl uncordon k8s-master3

3.5 Check the nodes: k8s-master3 has been upgraded

[root@k8s-master3 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 63d v1.18.2

k8s-master2 Ready master 63d v1.18.2

k8s-master3 Ready master 53d v1.19.16

k8s-node1 Ready <none> 63d v1.18.2

k8s-node2 Ready <none> 63d v1.18.2

k8s-node3 Ready <none> 63d v1.18.2

4. Upgrade the other control-plane nodes (k8s-master1, k8s-master2)

Add the yum repository

cat > /etc/yum.repos.d/kubernetes.repo <<EOF
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Import the images

# Export the images on k8s-master3

docker save k8s.gcr.io/kube-controller-manager:v1.19.16 k8s.gcr.io/kube-apiserver:v1.19.16 k8s.gcr.io/kube-proxy:v1.19.16 k8s.gcr.io/kube-scheduler:v1.19.16 k8s.gcr.io/etcd:3.4.13-0 k8s.gcr.io/coredns:1.7.0 k8s.gcr.io/pause:3.2 > /tmp/k8s_v1.19.16.tar

# Start a temporary HTTP server to serve the file

cd /tmp

python -m SimpleHTTPServer 8888

# On k8s-master1 and k8s-master2, download and load the images

cd /tmp

wget 192.168.0.153:8888/k8s_v1.19.16.tar

docker load -i k8s_v1.19.16.tar

Upgrade

yum install kubeadm-1.19.16-0 kubelet-1.19.16-0 kubectl-1.19.16-0 --disableexcludes=kubernetes

kubeadm upgrade node

systemctl daemon-reload

systemctl restart kubelet

Log:

[root@k8s-master1 yum.repos.d]# yum install kubeadm-1.19.16-0 kubelet-1.19.16-0 kubectl-1.19.16-0 --disableexcludes=kubernetes

已加载插件:fastestmirror

Loading mirror speeds from cached hostfile

* base: mirrors.aliyun.com

* epel: hkg.mirror.rackspace.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

kubernetes | 1.4 kB 00:00:00

正在解决依赖关系

--> 正在检查事务

---> 软件包 kubeadm.x86_64.0.1.18.2-0 将被 升级

---> 软件包 kubeadm.x86_64.0.1.19.16-0 将被 更新

--> 正在处理依赖关系 kubernetes-cni >= 0.8.6,它被软件包 kubeadm-1.19.16-0.x86_64 需要

--> 正在处理依赖关系 cri-tools >= 1.19.0,它被软件包 kubeadm-1.19.16-0.x86_64 需要

---> 软件包 kubectl.x86_64.0.1.18.2-0 将被 升级

---> 软件包 kubectl.x86_64.0.1.19.16-0 将被 更新

---> 软件包 kubelet.x86_64.0.1.18.2-0 将被 升级

---> 软件包 kubelet.x86_64.0.1.19.16-0 将被 更新

--> 正在检查事务

---> 软件包 cri-tools.x86_64.0.1.13.0-0 将被 升级

---> 软件包 cri-tools.x86_64.0.1.23.0-0 将被 更新

---> 软件包 kubernetes-cni.x86_64.0.0.7.5-0 将被 升级

---> 软件包 kubernetes-cni.x86_64.0.0.8.7-0 将被 更新

--> 解决依赖关系完成

依赖关系解决

======================================================================================================

Package 架构 版本 源 大小

======================================================================================================

正在更新:

kubeadm x86_64 1.19.16-0 kubernetes 8.3 M

kubectl x86_64 1.19.16-0 kubernetes 9.0 M

kubelet x86_64 1.19.16-0 kubernetes 20 M

为依赖而更新:

cri-tools x86_64 1.23.0-0 kubernetes 7.1 M

kubernetes-cni x86_64 0.8.7-0 kubernetes 19 M

事务概要

======================================================================================================

升级 3 软件包 (+2 依赖软件包)

总下载量:63 M

Is this ok [y/d/N]: y

Downloading packages:

Delta RPMs disabled because /usr/bin/applydeltarpm not installed.

(1/5): 4d300a7655f56307d35f127d99dc192b6aa4997f322234e754f16aaa60fd8906-cri-to | 7.1 MB 00:00:23

(2/5): 6e8fc5b12b06a19517237776f07bf8f171fbfec1e0345232ea945264d84790c3-kubead | 8.3 MB 00:00:29

(3/5): 42fa87f24a94214337b896649135fc72dfcd087f6160ec99ae827f877fc20e94-kubect | 9.0 MB 00:00:30

(4/5): 40cf354f26e340ee89801cedfa4333388aa5ff8895ba5dc1645b95a4b56e9c2a-kubele | 20 MB 00:01:09

(5/5): db7cb5cb0b3f6875f54d10f02e625573988e3e91fd4fc5eef0b1876bb18604ad-kubern | 19 MB 00:01:02

------------------------------------------------------------------------------------------------------

总计 552 kB/s | 63 MB 00:01:56

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

正在更新 : kubernetes-cni-0.8.7-0.x86_64 1/10

正在更新 : kubelet-1.19.16-0.x86_64 2/10

正在更新 : cri-tools-1.23.0-0.x86_64 3/10

正在更新 : kubectl-1.19.16-0.x86_64 4/10

正在更新 : kubeadm-1.19.16-0.x86_64 5/10

清理 : kubeadm-1.18.2-0.x86_64 6/10

清理 : kubernetes-cni-0.7.5-0.x86_64 7/10

清理 : kubelet-1.18.2-0.x86_64 8/10

清理 : cri-tools-1.13.0-0.x86_64 9/10

清理 : kubectl-1.18.2-0.x86_64 10/10

验证中 : kubelet-1.19.16-0.x86_64 1/10

验证中 : kubernetes-cni-0.8.7-0.x86_64 2/10

验证中 : kubeadm-1.19.16-0.x86_64 3/10

验证中 : kubectl-1.19.16-0.x86_64 4/10

验证中 : cri-tools-1.23.0-0.x86_64 5/10

验证中 : kubeadm-1.18.2-0.x86_64 6/10

验证中 : kubelet-1.18.2-0.x86_64 7/10

验证中 : cri-tools-1.13.0-0.x86_64 8/10

验证中 : kubernetes-cni-0.7.5-0.x86_64 9/10

验证中 : kubectl-1.18.2-0.x86_64 10/10

更新完毕:

kubeadm.x86_64 0:1.19.16-0 kubectl.x86_64 0:1.19.16-0 kubelet.x86_64 0:1.19.16-0

作为依赖被升级:

cri-tools.x86_64 0:1.23.0-0 kubernetes-cni.x86_64 0:0.8.7-0

完毕!

[root@k8s-master1 yum.repos.d]# kubeadm upgrade node

[upgrade] Reading configuration from the cluster...

[upgrade] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

unable to fetch the kubeadm-config ConfigMap: failed to get node registration: failed to get corresponding node: Get "https://k8s-vip:16443/api/v1/nodes/k8s-master1?timeout=10s": net/http: request canceled (Client.Timeout exceeded while awaiting headers)

To see the stack trace of this error execute with --v=5 or higher

[root@k8s-master1 yum.repos.d]# kubeadm upgrade node

[upgrade] Reading configuration from the cluster...

[upgrade] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[upgrade] Upgrading your Static Pod-hosted control plane instance to version "v1.19.16"...

Static pod: kube-apiserver-k8s-master1 hash: 2bac5c78665c637df9dc5cd7d0f0087c

Static pod: kube-controller-manager-k8s-master1 hash: bde38af668115eac9d0a0ed7d36ade15

Static pod: kube-scheduler-k8s-master1 hash: d43b0b00305163e674f0971f103dad48

[upgrade/etcd] Upgrading to TLS for etcd

Static pod: etcd-k8s-master1 hash: f1e1b18ad1875e1fc3d85054d444c19b

[upgrade/staticpods] Preparing for "etcd" upgrade

[upgrade/staticpods] Renewing etcd-server certificate

[upgrade/staticpods] Renewing etcd-peer certificate

[upgrade/staticpods] Renewing etcd-healthcheck-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/etcd.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-16-49/etcd.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: etcd-k8s-master1 hash: f1e1b18ad1875e1fc3d85054d444c19b

Static pod: etcd-k8s-master1 hash: f1e1b18ad1875e1fc3d85054d444c19b

Static pod: etcd-k8s-master1 hash: f1e1b18ad1875e1fc3d85054d444c19b

Static pod: etcd-k8s-master1 hash: f1e1b18ad1875e1fc3d85054d444c19b

Static pod: etcd-k8s-master1 hash: 9f6555b7223d6a6923f608cab0737423

[apiclient] Found 3 Pods for label selector component=etcd

[upgrade/staticpods] Component "etcd" upgraded successfully!

[upgrade/etcd] Waiting for etcd to become available

[upgrade/staticpods] Writing new Static Pod manifests to "/etc/kubernetes/tmp/kubeadm-upgraded-manifests203435992"

[upgrade/staticpods] Preparing for "kube-apiserver" upgrade

[upgrade/staticpods] Renewing apiserver certificate

[upgrade/staticpods] Renewing apiserver-kubelet-client certificate

[upgrade/staticpods] Renewing front-proxy-client certificate

[upgrade/staticpods] Renewing apiserver-etcd-client certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-apiserver.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-16-49/kube-apiserver.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: kube-apiserver-k8s-master1 hash: 2bac5c78665c637df9dc5cd7d0f0087c

Static pod: kube-apiserver-k8s-master1 hash: 87786aa17a8c2ca4bac1b7450205ecb1

[apiclient] Found 3 Pods for label selector component=kube-apiserver

[upgrade/staticpods] Component "kube-apiserver" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-controller-manager" upgrade

[upgrade/staticpods] Renewing controller-manager.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-controller-manager.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-16-49/kube-controller-manager.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: kube-controller-manager-k8s-master1 hash: bde38af668115eac9d0a0ed7d36ade15

Static pod: kube-controller-manager-k8s-master1 hash: 563c39d0d860b8b3811aa5fccbed7eef

[apiclient] Found 3 Pods for label selector component=kube-controller-manager

[upgrade/staticpods] Component "kube-controller-manager" upgraded successfully!

[upgrade/staticpods] Preparing for "kube-scheduler" upgrade

[upgrade/staticpods] Renewing scheduler.conf certificate

[upgrade/staticpods] Moved new manifest to "/etc/kubernetes/manifests/kube-scheduler.yaml" and backed up old manifest to "/etc/kubernetes/tmp/kubeadm-backup-manifests-2022-05-24-15-16-49/kube-scheduler.yaml"

[upgrade/staticpods] Waiting for the kubelet to restart the component

[upgrade/staticpods] This might take a minute or longer depending on the component/version gap (timeout 5m0s)

Static pod: kube-scheduler-k8s-master1 hash: d43b0b00305163e674f0971f103dad48

Static pod: kube-scheduler-k8s-master1 hash: b06246adee2a0b516af2a39b081397fd

[apiclient] Found 3 Pods for label selector component=kube-scheduler

[upgrade/staticpods] Component "kube-scheduler" upgraded successfully!

[upgrade] The control plane instance for this node was successfully updated!

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[upgrade] The configuration for this node was successfully updated!

[upgrade] Now you should go ahead and upgrade the kubelet package using your package manager.

5. Upgrade the worker nodes

Add the yum repository

cat > /etc/yum.repos.d/kubernetes.repo <<EOF
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Upgrade

yum install kubeadm-1.19.16-0 kubelet-1.19.16-0 kubectl-1.19.16-0 --disableexcludes=kubernetes

kubeadm upgrade node

systemctl daemon-reload

systemctl restart kubelet

Log:

[root@k8s-node2 ~]# cat > /etc/yum.repos.d/kubernetes.repo <<EOF

> [kubernetes]

> name=Kubernetes

> baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

> enabled=1

> gpgcheck=0

> repo_gpgcheck=0

> gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

> http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

> EOF

[root@k8s-node2 ~]# yum install kubeadm-1.19.16-0 kubelet-1.19.16-0 kubectl-1.19.16-0 --disableexcludes=kubernetes

已加载插件:fastestmirror

Determining fastest mirrors

epel/x86_64/metalink | 6.3 kB 00:00:00

* base: mirrors.aliyun.com

* epel: hkg.mirror.rackspace.com

* extras: mirrors.aliyun.com

* updates: mirrors.aliyun.com

base | 3.6 kB 00:00:00

epel | 4.7 kB 00:00:00

extras | 2.9 kB 00:00:00

kubernetes | 1.4 kB 00:00:00

http://mirrors.cloud.aliyuncs.com/centos/7/updates/x86_64/repodata/repomd.xml: [Errno 14] curl#6 - "Could not resolve host: mirrors.cloud.aliyuncs.com; 未知的错误"

正在尝试其它镜像。

http://mirrors.aliyuncs.com/centos/7/updates/x86_64/repodata/repomd.xml: [Errno 12] Timeout on http://mirrors.aliyuncs.com/centos/7/updates/x86_64/repodata/repomd.xml: (28, 'Connection timed out after 30001 milliseconds')

正在尝试其它镜像。

updates | 2.9 kB 00:00:00

(1/5): kubernetes/primary | 108 kB 00:00:00

(2/5): epel/x86_64/updateinfo | 1.0 MB 00:00:01

(3/5): extras/7/x86_64/primary_db | 247 kB 00:00:01

(4/5): epel/x86_64/primary_db | 7.0 MB 00:00:44

(5/5): updates/7/x86_64/primary_db | 16 MB 00:00:59

kubernetes 797/797

正在解决依赖关系

--> 正在检查事务

---> 软件包 kubeadm.x86_64.0.1.18.2-0 将被 升级

---> 软件包 kubeadm.x86_64.0.1.19.16-0 将被 更新

--> 正在处理依赖关系 kubernetes-cni >= 0.8.6,它被软件包 kubeadm-1.19.16-0.x86_64 需要

--> 正在处理依赖关系 cri-tools >= 1.19.0,它被软件包 kubeadm-1.19.16-0.x86_64 需要

---> 软件包 kubectl.x86_64.0.1.18.2-0 将被 升级

---> 软件包 kubectl.x86_64.0.1.19.16-0 将被 更新

---> 软件包 kubelet.x86_64.0.1.18.2-0 将被 升级

---> 软件包 kubelet.x86_64.0.1.19.16-0 将被 更新

--> 正在检查事务

---> 软件包 cri-tools.x86_64.0.1.13.0-0 将被 升级

---> 软件包 cri-tools.x86_64.0.1.23.0-0 将被 更新

---> 软件包 kubernetes-cni.x86_64.0.0.7.5-0 将被 升级

---> 软件包 kubernetes-cni.x86_64.0.0.8.7-0 将被 更新

--> 解决依赖关系完成

依赖关系解决

===============================================================================================================================================

Package 架构 版本 源 大小

===============================================================================================================================================

正在更新:

kubeadm x86_64 1.19.16-0 kubernetes 8.3 M

kubectl x86_64 1.19.16-0 kubernetes 9.0 M

kubelet x86_64 1.19.16-0 kubernetes 20 M

为依赖而更新:

cri-tools x86_64 1.23.0-0 kubernetes 7.1 M

kubernetes-cni x86_64 0.8.7-0 kubernetes 19 M

事务概要

===============================================================================================================================================

升级 3 软件包 (+2 依赖软件包)

总下载量:63 M

Is this ok [y/d/N]: y

Downloading packages:

Delta RPMs disabled because /usr/bin/applydeltarpm not installed.

(1/5): 6e8fc5b12b06a19517237776f07bf8f171fbfec1e0345232ea945264d84790c3-kubeadm-1.19.16-0.x86_64.rpm | 8.3 MB 00:00:27

(2/5): 4d300a7655f56307d35f127d99dc192b6aa4997f322234e754f16aaa60fd8906-cri-tools-1.23.0-0.x86_64.rpm | 7.1 MB 00:00:27

(3/5): 42fa87f24a94214337b896649135fc72dfcd087f6160ec99ae827f877fc20e94-kubectl-1.19.16-0.x86_64.rpm | 9.0 MB 00:00:29

(4/5): 40cf354f26e340ee89801cedfa4333388aa5ff8895ba5dc1645b95a4b56e9c2a-kubelet-1.19.16-0.x86_64.rpm | 20 MB 00:01:18

(5/5): db7cb5cb0b3f6875f54d10f02e625573988e3e91fd4fc5eef0b1876bb18604ad-kubernetes-cni-0.8.7-0.x86_64.rpm | 19 MB 00:01:00

-----------------------------------------------------------------------------------------------------------------------------------------------

总计 542 kB/s | 63 MB 00:01:58

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

正在更新 : kubernetes-cni-0.8.7-0.x86_64 1/10

正在更新 : kubelet-1.19.16-0.x86_64 2/10

正在更新 : cri-tools-1.23.0-0.x86_64 3/10

正在更新 : kubectl-1.19.16-0.x86_64 4/10

正在更新 : kubeadm-1.19.16-0.x86_64 5/10

清理 : kubeadm-1.18.2-0.x86_64 6/10

清理 : kubernetes-cni-0.7.5-0.x86_64 7/10

清理 : kubelet-1.18.2-0.x86_64 8/10

清理 : cri-tools-1.13.0-0.x86_64 9/10

清理 : kubectl-1.18.2-0.x86_64 10/10

验证中 : kubelet-1.19.16-0.x86_64 1/10

验证中 : kubernetes-cni-0.8.7-0.x86_64 2/10

验证中 : kubeadm-1.19.16-0.x86_64 3/10

验证中 : kubectl-1.19.16-0.x86_64 4/10

验证中 : cri-tools-1.23.0-0.x86_64 5/10

验证中 : kubeadm-1.18.2-0.x86_64 6/10

验证中 : kubelet-1.18.2-0.x86_64 7/10

验证中 : cri-tools-1.13.0-0.x86_64 8/10

验证中 : kubernetes-cni-0.7.5-0.x86_64 9/10

验证中 : kubectl-1.18.2-0.x86_64 10/10

更新完毕:

kubeadm.x86_64 0:1.19.16-0 kubectl.x86_64 0:1.19.16-0 kubelet.x86_64 0:1.19.16-0

作为依赖被升级:

cri-tools.x86_64 0:1.23.0-0 kubernetes-cni.x86_64 0:0.8.7-0

完毕!

[root@k8s-node2 ~]# kubeadm upgrade node

[upgrade] Reading configuration from the cluster...

[upgrade] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'

[preflight] Running pre-flight checks

[preflight] Skipping prepull. Not a control plane node.

[upgrade] Skipping phase. Not a control plane node.

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[upgrade] The configuration for this node was successfully updated!

[upgrade] Now you should go ahead and upgrade the kubelet package using your package manager.

[root@k8s-node2 ~]# systemctl daemon-reload

[root@k8s-node2 ~]# systemctl restart kubelet

6. Verify the upgrade
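Verification here comes down to two things: every node is `Ready`, and every node reports the target kubelet version. As a rough sketch, that check can be scripted by piping the output of `kubectl get nodes` through a small filter. `check_nodes` is a hypothetical helper name, and it assumes the default column layout, with STATUS in the second column and VERSION in the last.

```shell
#!/bin/sh
# check_nodes VERSION
# Reads `kubectl get nodes` output on stdin and fails if any node is not
# exactly "Ready" or reports a kubelet version other than VERSION.
# Hypothetical helper, assuming the default kubectl column layout.
check_nodes() {
  awk -v want="$1" '
    NR == 1 { next }                     # skip the header row
    NF == 0 { next }                     # skip empty lines
    $2 != "Ready" || $NF != want { print "not upgraded: " $1; bad = 1 }
    END { exit bad + 0 }
  '
}

# Usage (requires cluster access):
#   kubectl get nodes | check_nodes v1.19.16 && echo "all nodes upgraded"
```

A node that is still cordoned shows `Ready,SchedulingDisabled` and is reported too, which is useful for catching a node that was drained but never uncordoned.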

kubectl get nodes

[root@k8s-master1 manifests]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master1 Ready master 63d v1.19.16

k8s-master2 Ready master 63d v1.19.16

k8s-master3 Ready master 53d v1.19.16

k8s-node1 Ready <none> 63d v1.19.16

k8s-node2 Ready <none> 63d v1.19.16

k8s-node3 Ready <none> 63d v1.19.16

7. Other

Take a look at the logs of the components in kube-system and confirm that they are running normally.
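Reading the log by eye works for a single pod. For a quick non-interactive pass, one option is to count the lines logged at error or fatal level. The sketch below assumes the usual klog line prefix, where the first character is the severity (`I`, `W`, `E` or `F`) followed by the month and day, as in the `I0524 ...` lines shown in this section. `count_errors` is a hypothetical helper name.

```shell
#!/bin/sh
# count_errors
# Reads component logs on stdin and prints how many lines carry a klog
# error (E) or fatal (F) prefix such as "E0524 ". Hypothetical helper.
count_errors() {
  # grep -c exits non-zero when the count is 0; "|| true" keeps the
  # function from reporting that as a failure.
  grep -cE '^[EF][0-9]{4} ' || true
}

# Usage (requires cluster access; no -f, so the command returns):
#   kubectl -n kube-system logs --tail 100 kube-controller-manager-k8s-master1 | count_errors
```

A count of 0 over the last 100 lines does not prove the component is healthy, but a non-zero count shows where to look first.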

kubectl -n kube-system logs -f --tail 100 kube-controller-manager-k8s-master1

[root@k8s-master1 manifests]# kubectl -n kube-system logs -f --tail 100 kube-controller-manager-k8s-master1

Flag --port has been deprecated, see --secure-port instead.

I0524 07:18:08.534563 1 serving.go:331] Generated self-signed cert in-memory

I0524 07:18:08.862673 1 controllermanager.go:175] Version: v1.19.16

I0524 07:18:08.864107 1 secure_serving.go:202] Serving securely on 127.0.0.1:10257

I0524 07:18:08.864171 1 leaderelection.go:243] attempting to acquire leader lease kube-system/kube-controller-manager...

I0524 07:18:08.864571 1 tlsconfig.go:240] Starting DynamicServingCertificateController

I0524 07:18:08.864664 1 dynamic_cafile_content.go:167] Starting request-header::/etc/kubernetes/pki/front-proxy-ca.crt

I0524 07:18:08.864704 1 dynamic_cafile_content.go:167] Starting client-ca-bundle::/etc/kubernetes/pki/ca.crt